R1

Open Source Statistics with R

Introduction

R is a mature, open-source (i.e. free!) statistics package, with an intuitive interface, excellent graphics and a vibrant community constantly adding new methods for the statistical investigation of your data to the library of packages available.

The goal of this tutorial is to introduce you to the R package, and not to be an introductory course in statistics.

Some excellent examples of using R can also be found at: http://msenux.redwoods.edu/math/R/ and http://www.r-tutor.com/

Getting Started

The very simplest thing we can do with R is to perform some arithmetic at the command prompt:

> phi <- (1+sqrt(5))/2

> phi

[1] 1.618034Parentheses are used to modify the usual order of precedence of the operators (/ will typically be evaluated before +). Note the [1] accompanying the returned value. All numbers entered at the console are interpreted as a vector. The '[1]' indicates that the line in question is displaying the vector of values starting at first index. We can use the handy sequence function to create a vector containing more than a single element:

> odds <- seq(from=1, to=67, by=2)

> odds

[1] 1 3 5 7 9 11 13 15 17 19 21 23 25 27 29 31 33 35 37 39 41 43 45 47 49

[26] 51 53 55 57 59 61 63 65 67From the above example, we can see that both the <- and = operators can be used for assignment.

Vectors are commonly used data structures in R:

coords.bris <- c(51.5, 2.6)As are matrices:

> magic <- matrix(data=c(2,7,6,9,5,1,4,3,8),nrow=3,ncol=3)

> magic

[,1] [,2] [,3]

[1,] 2 9 4

[2,] 7 5 3

[3,] 6 1 8Where the c function combines the arguments given in the parentheses. We can access portions of the array using the syntax shown in the square brackets. For example, we can access the first row using the [1,] notation, and similarly the second column using [,2]. Since the square is 3x3 magic, the numbers in both slices should sum to 15:

> sum(magic[1,])

[1] 15

> sum(magic[,2])

[1] 15Single elements and ranges can also accessed:

> magic[2,2]

[1] 5

> magic[2:3,2:3]

[,1] [,2]

[1,] 5 3

[2,] 1 8R also provides arrays, which have more than two dimensions, and lists to hold heterogeneous collections.

A very commonly used data structure is the data frame, which R uses to store tabular data. Given several vectors of equal length, we can collate them into a data frame:

> country <- c("USA", "China", "GB")

> gold <- c(46, 38, 29)

> silver <- c(29, 27, 17)

> bronze <- c(29, 23, 19)

> medals.2012 <- data.frame(country, gold, silver, bronze)

> medals.2012

country gold silver bronze

1 USA 46 29 29

2 China 38 27 23

3 GB 29 17 19Standard Graphics: A taster

An aspect which makes R popular are it's graphing functions. R also has some very handy built-in data sets--we'll use this to demonstrate just a small fraction of R's graphing abilities.

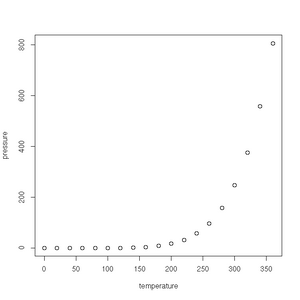

First up is the humble plot() function. Given a data frame of points, such as one charting the relationship between temperature and the vapour pressure of mercury, it will give us a (handily labelled) scatter plot:

> plot(pressure)See the gallery below for all the plots created in this section.

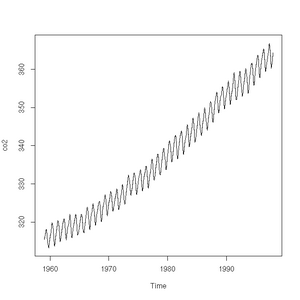

The plot function will also accept a time-series (another class of object recognised by R) and will sensibly join the points with a line:

> plot(co2)

> class(co2)

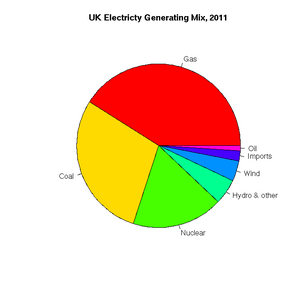

[1] "ts"Pie charts are easily constructed. In this case, to show the relative proportions of electricity generated from different sources in the UK in 2011 (source: https://www.gov.uk/government/.../5942-uk-energy-in-brief-2012.pdf):

> uk.electricty.sources.2011 <- c(41,29,18,5,4,2,1)

> names(uk.electricty.sources.2011) <- ("Gas", "Coal", "Nuclear", "Hydro & other", "Wind", "Imports", "Oil")

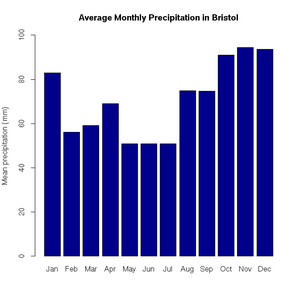

> pie(uk.electricty.sources.2011, main="UK Electricty Generating Mix, 2011", col=rainbow(7))Next, let's create a bar chart of monthly average precipitation falling here in the fair city of Bristol (source: http://www.worldweatheronline.com):

> bristol.precip <- c(82.9, 56.1, 59.2, 69, 50.8, 50.9, 50.8, 74.8, 74.7, 91.1, 94.5, 93.6)

> names(bristol.precip) <- c("Jan", "Feb", "Mar", "Apr", "May", "Jun", "Jul", "Aug", "Sep", "Oct", "Nov", "Dec")

> barplot(bristol.precip,

+ main="Average Monthly Precipitation in Bristol",

+ ylab="Mean precipitation (mm)",

+ ylim=c(0,100),

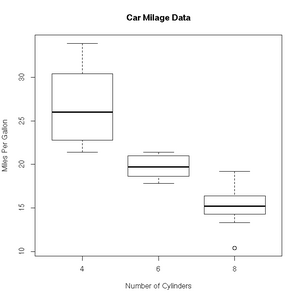

+ col=c("darkblue"))'Box and whisker' plots are useful ways to graph the quartiles of some data. In this case, the fuel efficiencies of various US cars, circa 1974:

> boxplot(mpg~cyl,data=mtcars, main="Car Milage Data",

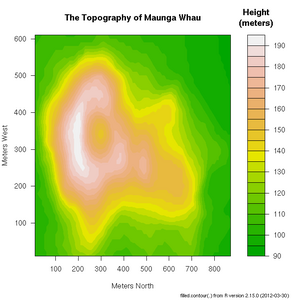

+ xlab="Number of Cylinders", ylab="Miles Per Gallon")R includes a very useful help facility. In the case of the filled.contour() plotting function, the help page includes an example of it's use to plot the topology of a volcano in Auckland, NZ:

> ?filled.countourReading Data from File

R provides some very useful functions for reading and writing data from/to file.

Text Files

Let's start with text files. If your data is organised into a file such that it looks like a table with column headings:

Perhaps the simplest one is read.table(). If I have a text file with the following contents:

country gold silver bronze "USA" 46 29 29 "China" 38 27 23 "Great Britain" 29 17 19 "Russian Federation" 24 26 32 "Republic of Korea" 13 8 7 "Germany" 11 19 14

It will be a simple matter to use the read.table() function to load the data into R:

> medals.2012 <- read.table("medals.txt", header=TRUE)

> medals.2012

country gold silver bronze

1 USA 46 29 29

2 China 38 27 23

3 Great Britain 29 17 19

4 Russian Federation 24 26 32

5 Republic of Korea 13 8 7

6 Germany 11 19 14There is a corresponding write.table() function to export the contents of a data frame into a text file.

CSV files can be easily handled by specifying sep="," as an argument to read.table(). However, for convenience, there are also read.csv() and write.csv() functions defined. For example:

> write.csv(medals.2012,"medals.csv")Gives us the file, medals.csv, with the contents:

"","country","gold","silver","bronze" "1","USA",46,29,29 "2","China",38,27,23 "3","Great Britain",29,17,19 "4","Russian Federation",24,26,32 "5","Republic of Korea",13,8,7 "6","Germany",11,19,14

Binary Files

The save() function will store an R data structure in binary form:

> save(medals.2012,file="medals.RData")gethin@gethin-desktop:~$ file medals.RData medals.RData: gzip compressed data, from Unix

There is, of course, a corresponding function to load such data:

> load("medals.RData")Databases

If you would like to read and write data directly from/to a database, there are several packages to help you. See http://cran.r-project.org/doc/manuals/r-release/R-data.html#Relational-databases for more information.

Functions

Packages

Listed at http://cran.r-project.org/

Let's install the multicore package, that will give us access to functions within R which will run on the multiple processors which we often find in our computers these days:

> install.packages("multicore")Et voila! It is done.

We can check which packages are currently loaded into the library available from our workspace:

> library()If we need to add one, we type e.g.:

> library(multicore)Now, an example of using a function from the multicore package. The lapply function, which is included in the standard R core, will map a given function over a list inputs, giving a list of the function outputs in return. For example, we can map a squaring function over the list of integers from 1 to 3:

> lapply(1:3, function(x) x^2)which gives us the list:

[[1]] [1] 1 [[2]] [1] 4 [[3]] [1] 9

Now, we can do the same work in parallel using:

> mclapply(1:3, function(x) x^2)Examples of Common Tasks

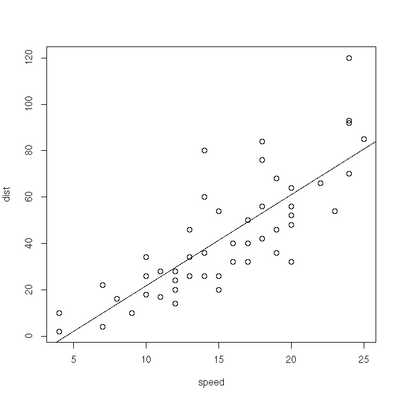

Linear Regression

> plot(cars)

> res=lm(dist ~ speed, data=cars)

> abline(res)Exercise

- Weighted least squares. The lm function will accept a vector of weights, lm(... weights=...). If given, the function will optimise the line of best fit according a the equation of weighted least squares. Experiment with different linear model fits, given different weighting vectors. Some handy hints for creating a vector of weights:

- w1<-rep(0.1,50) will give you a vector, length 50, where each element has a value of 0.1. W1[1]<-10 will give the first element of the vector a value of 10.

- w2<-seq(from=0.02, to=1.0, by=0.02) provides a vector containing a sequence of values from 0.02 to 1.0 in steps of 0.02 (handily, again 50 in total).

Significance Testing

> boys_2=c(90.2, 91.4, 86.4, 87.6, 86.7, 88.1, 82.2, 83.8, 91, 87.4)

> girls_2=c(83.8, 86.2, 85.1, 88.6, 83, 88.9, 89.7, 81.3, 88.7, 88.4)

> res=var.test(boys_2,girls_2)

> res

F test to compare two variances

data: boys_2 and girls_2

F = 1.0186, num df = 9, denom df = 9, p-value = 0.9786

alternative hypothesis: true ratio of variances is not equal to 1

95 percent confidence interval:

0.2529956 4.1007126

sample estimates:

ratio of variances

1.018559

> res=t.test(boys_2, girls_2, var.equal=TRUE, paired=FALSE)

> res

Two Sample t-test

data: boys_2 and girls_2

t = 0.8429, df = 18, p-value = 0.4103

alternative hypothesis: true difference in means is not equal to 0

95 percent confidence interval:

-1.656675 3.876675

sample estimates:

mean of x mean of y

87.48 86.3R and HPC

Parallel Package

R code to show the use of mclapply:

library(parallel)

library(MASS)

RNGkind("L'Ecuyer-CMRG")

mc.cores <- detectCores()

mc.cores

results <- mclapply(rep(25, 4),

function(nstart) kmeans(Boston, 4, nstart=nstart),

mc.cores=mc.cores)

i <- sapply(results, function(result) result$tot.withinss)

result <- results[[which.min(i)]]

resultSubmission script:

#!/bin/bash

#!

#! Sample PBS file

#!

#! Name of job

#PBS -N Parallel-R

#PBS -o Submission.out

#! Number of nodes (in this case 1 node with 8 CPU cores)

#! The total number of nodes passed to mpirun will be nodes*ppn

#! Second entry: Total amount of wall-clock time (true time).

#! 02:00:00 indicates 02 hours

#PBS -l nodes=1:ppn=8,walltime=00:00:05

#! application name

application="R"

#! Run options for the application

options="CMD BATCH parallel-mclapply.r"

#! Ensure that we have the correct version of R loaded

module add languages/R-2.15.1

###############################################################

### You should not have to change anything below this line ####

###############################################################

#! change the working directory (default is home directory)

cd $PBS_O_WORKDIR

#! General info, useful for debugging

echo Running on host `hostname`

echo Time is `date`

echo Directory is `pwd`

echo PBS job ID is $PBS_JOBID

echo This jobs runs on the following machines:

echo `cat $PBS_NODEFILE | uniq`

#! Create a machine file (used for multi-node jobs)

cat $PBS_NODEFILE > machine.file.$PBS_JOBID

numnodes=`wc $PBS_NODEFILE | awk '{ print $1 }'`

#! Run the R script

$application $option